Unless you’re copying the contents of an entire book and pasting it into your conversation with an AI chatbot, or asking your chatbot to write a detailed report on every species of fungi (there are millions), chances are you’ve never run into problems with a prompt that’s too long.

But there’s a limit to how much information a chatbot can take in and spit out at once. That limit depends on the model’s context window (or context length): the number of tokens that a large language model (LLM) or large multimodal model (LMM) can process at once, including the input prompt and generated output.

Larger context windows are increasingly important in developing LLMs and LMMs for a number of reasons. But there are tradeoffs to allowing for lengthier inputs and outputs. Let’s dig in.

LLMs: A quick recap

Before we get into the finer details of context windows, it’s helpful to have a rough understanding of how the latest AI models work. For a detailed explanation, check out Zapier’s article on how ChatGPT works (the underlying principles are similar across LLMs). But for now, here’s what you need to know.

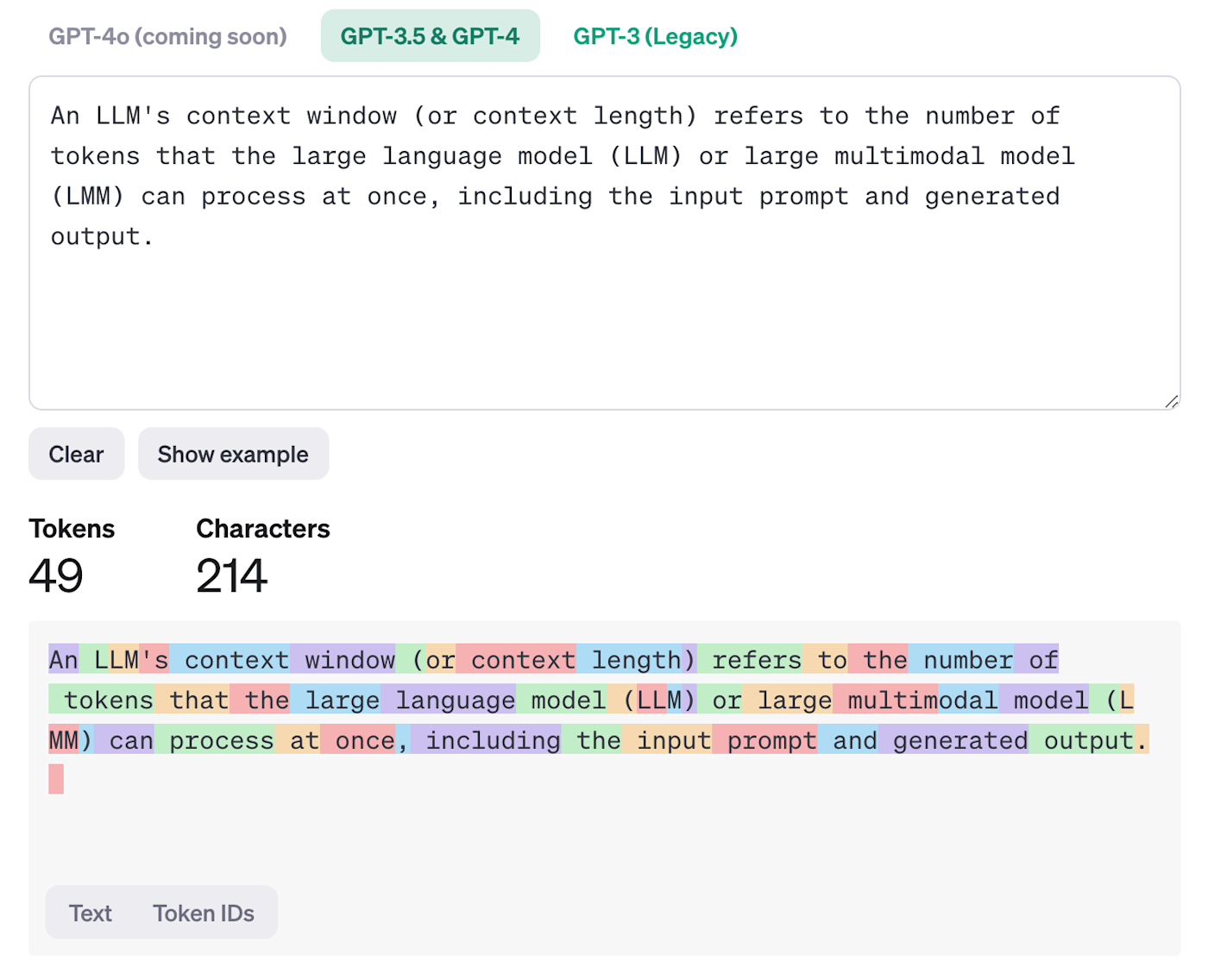

LLMs break text down into “tokens,” with each token being equal to roughly four characters of English text. Each token is individually encoded within an LLM’s neural network and corresponds to a specific concept, word, or part of a word. To get a better idea of how a piece of text gets tokenized by an LLM, you can play around with OpenAI’s tokenizer (as shown in the accompanying image).
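As a rough illustration of the four-characters-per-token rule of thumb, here’s a minimal Python sketch. The function name `estimate_tokens` and the fixed ratio are assumptions made for this example; real tokenizers split text differently, so treat the result as a ballpark figure only.

```python
import math

CHARS_PER_TOKEN = 4  # rule of thumb from the text, not an exact figure


def estimate_tokens(text: str) -> int:
    """Return a ballpark token count using the ~4 characters per token heuristic."""
    if not text:
        return 0
    return math.ceil(len(text) / CHARS_PER_TOKEN)


prompt = "What is a context window in AI?"
print(estimate_tokens(prompt))
```

For anything that depends on exact counts (like billing), a real tokenizer is the safer choice.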

An LLM then generates an output by taking a text input, breaking it down into its corresponding tokens, drawing on what it learned from its training data and the information included in the prompt, and returning what it considers the most likely follow-on tokens.

With all that out of the way, let’s look at the context window.

What is a context window in AI?

A context window in AI is the maximum number of tokens an LLM can process in one go. While that sounds simple, things get messy when you consider that the LLM has to use the context window to keep track of both the input and output.

Things get even messier when you start to deploy LLMs in the real world. Consider ChatGPT. Its most powerful model, GPT-4o, has a maximum context window of 128,000 tokens. But you can’t just put the full text of a novel into ChatGPT and expect to have a conversation about it.

Here’s why: In addition to your prompt, ChatGPT also has to process all sorts of other information, all within its maximum context window:

- Instructions from OpenAI (the company behind ChatGPT)
- Any default directives you’ve set up
- Your conversation history
- The contents of any attached files or websites it’s looked up
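Everything in the list above draws on the same token budget as your prompt and the model’s reply. Here’s a minimal sketch of that arithmetic. The function `remaining_tokens` is hypothetical, and every number except the 128,000-token window is a made-up placeholder, since the real sizes of these components aren’t public.

```python
def remaining_tokens(context_window: int, **used: int) -> int:
    """Tokens left for the reply after everything else in the window is counted."""
    return context_window - sum(used.values())


# All figures except the 128,000-token window are made-up placeholders.
left = remaining_tokens(
    128_000,
    system_instructions=1_500,
    custom_directives=300,
    conversation_history=20_000,
    attachments=90_000,
    current_prompt=200,
)
print(left)  # 16000
```

Under these assumed numbers, a single large attachment uses up most of the window before the model has written a word.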

Then you have to take into account that different ChatGPT users have access to different GPT models with different context lengths. For example, one version of GPT-4o has a context window of 8,192 tokens, while another has a maximum of 32,768 tokens (which version ChatGPT uses depends on things like demand and the plan you’re on).

On the other hand, if you use the GPT-4o API, you can access the full context window (128,000 tokens) and use it to do something specific with the text of your novel. In that case, you might be able to fit everything you need into the context window.

LLM context windows at a glance

To give you a better idea of the numbers, here’s a quick overview of how many tokens the most popular LLMs can process. Keep in mind: chances are these numbers will have increased by the time you read this.

Gemini context window

Gemini 1.5 Pro has a context window of up to 1 million tokens. But developers can access up to 2 million tokens via Google AI Studio and Google Vertex AI or by using Zapier with the same tools.

GPT-4o context window

GPT-4o has a context window of 128,000 tokens—much lower than Gemini’s but still probably more than you could ever need.

Claude context window

Claude used to be the leader in context length—200,000 tokens via its most recent model, Claude 3.5 Sonnet. But Gemini’s recent advances have blown it out of the water.

Llama context window

Llama 3 has a smaller context window of 8,000 tokens, but Meta (the company behind the LLM) has said they’re planning to increase that as part of upcoming developments.

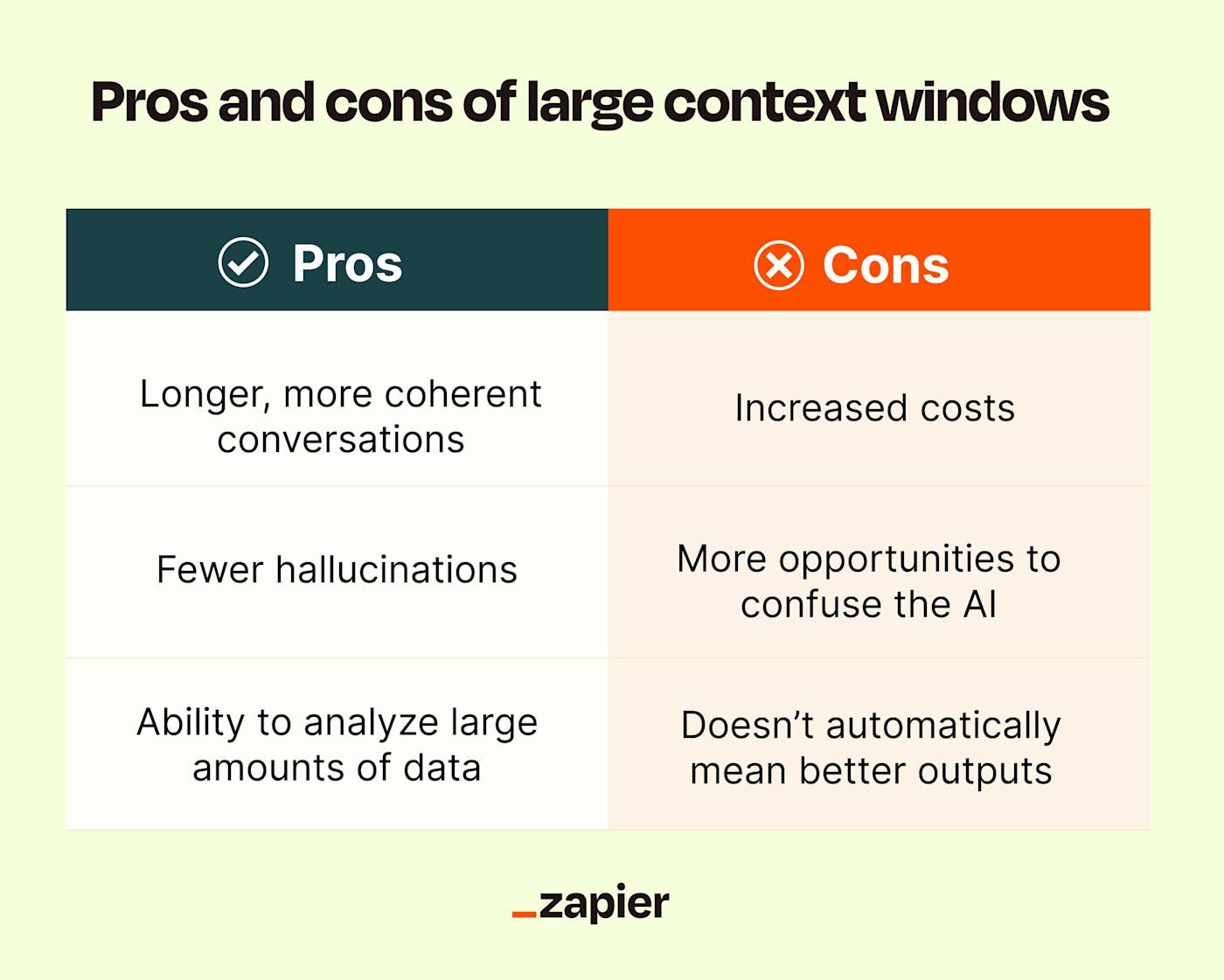

The pros and cons of a large context window in AI

While a longer context window is a major development, it’s not without its downsides. Here are the pros and cons of a large context window in LLMs.

The pros of a large context window in AI

Longer, more coherent conversations

The most obvious pro of a larger context window is that you can give the AI model more information to work with. You can feed it more specific requests, have longer conversations without the chatbot forgetting previous details, and count on it to generate a response based on more detailed information.

Let’s say you’re striking up a conversation with ChatGPT. With a 128,000-token context window, you can chat with it for a very long time and it’ll still be able to recall the instructions you gave it in your initial prompt. For AI productivity tools like AI meeting assistants to truly help you be more productive, this is the kind of memory they’ll need.
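Once a conversation outgrows the window, something has to be dropped. One common approach is to keep only the most recent messages that fit. The sketch below illustrates that idea; it’s an assumption for illustration, not a description of how ChatGPT manages history, and `trim_history` and the four-characters-per-token estimate are hypothetical stand-ins.

```python
import math


def estimate_tokens(text: str) -> int:
    """Ballpark token count, assuming roughly 4 characters per token."""
    return math.ceil(len(text) / 4)


def trim_history(messages: list[str], budget: int) -> list[str]:
    """Keep the most recent messages whose estimated tokens fit within budget."""
    kept: list[str] = []
    used = 0
    for message in reversed(messages):  # walk from newest to oldest
        cost = estimate_tokens(message)
        if used + cost > budget:
            break  # this message and everything older is dropped
        kept.append(message)
        used += cost
    kept.reverse()  # restore chronological order
    return kept


history = ["a" * 40, "b" * 40, "c" * 40]  # about 10 tokens each
print(len(trim_history(history, budget=25)))  # 2
```

The larger the budget, the later the oldest messages (often the ones holding your original instructions) fall out of view.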

Fewer hallucinations

Let’s say you’re building an internal IT chatbot. A larger context window allows you to encode your database with all the context you think the LLM could conceivably need to answer typical requests. This includes things like employee manuals, help docs, and example conversations. When someone asks the chatbot a question—for example, “How do I log in to my email account on my personal smartphone?”—the chatbot searches through the database for relevant context and examples, and automatically bakes them into the prompt.

This technique, known as retrieval-augmented generation (RAG), increases the chances that the chatbot will provide an accurate response and drastically reduces the chance of it making something up or telling users how to log in to Gmail.
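To make the retrieve-then-prompt flow concrete, here’s a toy sketch. It ranks documents by simple word overlap with the question, which is a stand-in chosen to keep the example self-contained; production RAG systems typically use embeddings and a vector database instead. The function names and sample documents are all hypothetical.

```python
import re


def words(text: str) -> set[str]:
    """Lowercased set of alphanumeric words in the text."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, documents: list[str], top_k: int = 2) -> list[str]:
    """Rank documents by how many words they share with the question."""
    q = words(question)
    ranked = sorted(documents, key=lambda d: len(q & words(d)), reverse=True)
    return ranked[:top_k]


def build_prompt(question: str, documents: list[str]) -> str:
    """Bake the retrieved context into the prompt sent to the model."""
    context = "\n".join(f"- {doc}" for doc in retrieve(question, documents))
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"


docs = [
    "To log in to company email on a personal smartphone, install the approved mail app.",
    "Expense reports are due on the last business day of each month.",
    "Reset your VPN password from the IT self-service portal.",
]
question = "How do I log in to my email account on my personal smartphone?"
print(build_prompt(question, docs))
```

A larger context window raises `top_k`: the more tokens available, the more retrieved passages you can include alongside the question.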

Analyze large amounts of data

The other exciting thing about a longer context length is that it allows AI models to parse longer documents and large codebases. So if you want to feed the AI that 500-page novel and find out how many times two specific characters interact in a book, a large context window makes that possible.
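Whether that 500-page novel actually fits depends on the model. The sketch below uses the context window sizes quoted in this article, plus two assumptions that aren’t from the article: about 300 words per page and about four tokens for every three words. The function names are hypothetical.

```python
# Context window sizes as quoted in this article; they may be out of date.
CONTEXT_WINDOWS = {
    "Gemini 1.5 Pro": 1_000_000,
    "Claude 3.5 Sonnet": 200_000,
    "GPT-4o": 128_000,
    "Llama 3": 8_000,
}


def estimate_book_tokens(pages: int, words_per_page: int = 300) -> int:
    """Rough token count, assuming about 4 tokens for every 3 words."""
    return pages * words_per_page * 4 // 3


def models_that_fit(tokens_needed: int) -> list[str]:
    """Names of models whose window is at least tokens_needed."""
    return [name for name, window in CONTEXT_WINDOWS.items() if window >= tokens_needed]


novel = estimate_book_tokens(500)
print(novel)  # 200000
# Reserve an assumed 4,000 tokens for the question and the model's answer.
print(models_that_fit(novel + 4_000))  # ['Gemini 1.5 Pro']
```

Under these assumptions, the novel alone fills a 200,000-token window, leaving no room for a question or an answer.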

The cons of a large context window in AI

Increased costs

The computing requirements for processing AI prompts scale quadratically with token length. For example, it takes four times the computing resources to process 2,000 tokens as it does to process 1,000. This means not only will it take longer to output a response, but it’ll also cost you more.
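Here’s what quadratic scaling looks like in numbers. This sketch assumes cost is exactly proportional to the square of the token count, which is a simplification; `relative_compute` is a hypothetical helper for illustration.

```python
def relative_compute(tokens: int, baseline_tokens: int = 1_000) -> float:
    """Compute cost relative to the baseline, assuming cost grows with tokens squared."""
    return (tokens / baseline_tokens) ** 2


for n in (1_000, 2_000, 4_000, 8_000):
    print(n, relative_compute(n))
```

Each doubling of the prompt quadruples the relative cost, so an 8,000-token prompt comes out at 64 times the 1,000-token baseline.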

Let’s continue looking at ChatGPT: the GPT-4o model costs $5 per million input tokens and $15 per million output tokens (the input and output price is cut in half if you go with batch API pricing). One million tokens may sound like a lot, but depending on the amount of context the model has to process with each request, things quickly add up.
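To see how things add up, here’s a small cost calculator using the GPT-4o prices quoted above. The request sizes (100,000 input tokens and 1,000 output tokens) are assumptions chosen for illustration, and `request_cost` is a hypothetical helper.

```python
INPUT_PRICE_PER_MILLION = 5.00    # USD, GPT-4o figure quoted above
OUTPUT_PRICE_PER_MILLION = 15.00  # USD, GPT-4o figure quoted above


def request_cost(input_tokens: int, output_tokens: int, batch: bool = False) -> float:
    """Cost in USD of one request; batch pricing halves both rates."""
    cost = (input_tokens * INPUT_PRICE_PER_MILLION
            + output_tokens * OUTPUT_PRICE_PER_MILLION) / 1_000_000
    return cost / 2 if batch else cost


per_request = request_cost(100_000, 1_000)
print(f"${per_request:.3f} per request")
print(f"${per_request * 1_000:.2f} per 1,000 requests")
```

At these assumed sizes, each request costs about half a dollar, so a thousand context-heavy requests come to roughly $515.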

More context doesn’t necessarily mean better outputs

A large context window solves some data retrieval and accuracy issues with LLMs, but not all of them. If you provide an LLM with lots of low-quality data, it’s not going to magically pull out the one or two relevant points on its own. You still need to feed it with high-quality context and follow all the principles of writing an effective prompt.

Hidden opportunities to still hallucinate

While a large context window can reduce the chances of hallucinations, if you’re not careful, adding more context can actually create more opportunities for inaccuracy. For example, if you feed it conflicting information, there’s a good chance it’ll spit out inconsistent responses. And if that conflicting information is buried deep within textbook-length knowledge sources, you’ll be hard-pressed to find the source of the problem to fix it.

Is a large context window a good thing?

If doubling the length of your prompt adds lots of additional information that’s going to help the LLM give you a better answer, then awesome. But if you’re throwing in lots of irrelevant extra references just because you can, you’re ultimately slowing things down. And, if you’re using an API, you’re pouring money down the drain.

The key here is to find the right balance, especially with smaller AI models running on devices like smartphones and laptops.

Automate AI with Zapier

Now that you have a better understanding of context windows, especially the importance of prioritizing quality over quantity, connect your AI-powered tools with Zapier, and use that knowledge to build and automate smarter workflows.

Context for all

LLMs are continuing to get more powerful and useful. Until about a year ago, the big number that every AI company was touting was the number of parameters their models had. Now that all the major models have enough parameters for high-level performance, context length appears to be the next thing to brag about. Expect this to continue for a year or two until every model has a context window of one million tokens. Then we’ll be onto the next thing, probably speed and efficiency.