When OpenAI released the first iteration of ChatGPT in late 2022, it quickly became the fastest-growing app ever, amassing over one hundred million users in its first two months. GPT-4, an improved model released in 2023, is now the standard by which all other large language models (LLMs) are judged. Recently, another LLM has begun challenging ChatGPT for that title: Anthropic’s Claude 3.

I’ve used ChatGPT since its release and have tested Claude regularly in the months since its beta. To compare these two AI juggernauts, I ran over a dozen tests to gauge their performance on different tasks.

Here, I’ll explain the strengths and limitations of Claude and ChatGPT, so you can decide which is best for you.

Claude vs. ChatGPT at a glance

Claude and ChatGPT are built on similarly capable large language models (LLMs) and large multimodal models (LMMs). They differ in some important ways, though: ChatGPT is more versatile, with features like image generation and internet access, while Claude offers cheaper API access and a much larger context window (meaning it can process more data at once).

Here’s a quick rundown of the differences between these two AI models.

| | Claude | ChatGPT |
|---|---|---|
| Company | Anthropic | OpenAI |
| AI model | Claude 3 (Opus, Sonnet, Haiku) | GPT-3.5, GPT-4 |
| Context window | 200,000 tokens (and up to 1,000,000 tokens for certain use cases) | 32,000 tokens |
| Internet access | No | Yes |
| Image generation | No | Yes (DALL·E) |
| Supported languages | Officially, English, Japanese, Spanish, and French, but in my testing, Claude supported every language I tried (even less common ones like Azerbaijani) | 95+ languages |
| Paid tier | $20/month for Claude Pro | $20/month for ChatGPT Plus |
| API pricing | $15 per 1M input tokens (Opus); $3 per 1M input tokens (Sonnet); $0.25 per 1M input tokens (Haiku) | $60 per 1M input tokens (GPT-4 32K); $30 per 1M input tokens (GPT-4); $10 per 1M input tokens (GPT-4 Turbo); $0.50 per 1M input tokens (GPT-3.5 Turbo) |
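
To make the API prices concrete, here's a rough Python sketch that estimates what a single prompt would cost on each model. It assumes the dollar figures in the table are rates per one million input tokens (which is how both vendors publish API prices) and it ignores output tokens, which are billed separately. The dictionary and function names are my own, not part of either API.

```python
# Input-token prices in USD, copied from the table above.
# Assumption: each figure is a rate per 1,000,000 input tokens.
PRICE_PER_MILLION_INPUT_TOKENS = {
    "Claude 3 Opus": 15.00,
    "Claude 3 Sonnet": 3.00,
    "Claude 3 Haiku": 0.25,
    "GPT-4 32K": 60.00,
    "GPT-4": 30.00,
    "GPT-4 Turbo": 10.00,
    "GPT-3.5 Turbo": 0.50,
}


def input_cost_usd(model: str, input_tokens: int) -> float:
    """Estimate the input-side cost of one request (output tokens not included)."""
    rate = PRICE_PER_MILLION_INPUT_TOKENS[model]
    return rate * input_tokens / 1_000_000


if __name__ == "__main__":
    prompt_tokens = 50_000  # hypothetical prompt size
    # Print the models from cheapest to most expensive for this prompt.
    for model in sorted(PRICE_PER_MILLION_INPUT_TOKENS, key=PRICE_PER_MILLION_INPUT_TOKENS.get):
        print(f"{model:<16} ${input_cost_usd(model, prompt_tokens):.4f}")
```

Treat the output as a ballpark only: real bills also depend on output length and on whatever pricing is current when you read this.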

To compare the performance of one LLM to another, AI firms rely on benchmarks, which work much like standardized tests. OpenAI’s benchmarking of GPT-4 shows impressive performances on standard exams like the Uniform Bar Exam, LSAT, GRE, and AP Macroeconomics exam. Meanwhile, Anthropic has published a head-to-head comparison of Claude, ChatGPT, and Gemini that shows its Claude 3 Opus model dominating.

While these benchmarks are undoubtedly useful, some machine learning experts speculate that this kind of testing overstates the progress of LLMs. As new models are released, they may (perhaps accidentally) be trained on their own evaluation data. As a result, they get better and better at standardized tests—but when asked to figure out new variations of those same questions, they sometimes struggle.

To get a sense for how each model performs on common daily-use tasks, I devised my own comparisons. Here’s a high-level overview of what I found.

| Task | Winner | Observations |
|---|---|---|
| Creativity | Claude | Claude’s default writing style is more human-sounding and less generic. |
| Proofreading and fact-checking | Claude | Both do a good job spotting errors, but Claude is a better editing partner because it presents mistakes and corrections more clearly. |
| Image processing | ChatGPT | Neither Claude nor ChatGPT is 100% accurate at identifying objects in images, but ChatGPT made fewer mistakes in my tests. |
| Logic and reasoning | Tie | From math to physics to riddles, both LLMs were consistent with what they got right (and what they got wrong). |
| Emotion and ethics | Claude | Claude has a noticeably more “human” and empathetic approach than ChatGPT, which tends to come off as more robotic and rational. |
| Analysis and summaries | Claude | While both models are effective at analysis, Claude’s larger context window makes it better for longer documents. |
| Integrations | ChatGPT | From its native DALL·E image generation tool to its internet access and third-party GPTs, ChatGPT’s capabilities go beyond Claude’s standard offering. |

Read on to learn more about how Claude and ChatGPT performed on each task.

Claude is a better partner for creativity

When ChatGPT first came out, I started where everyone else did: generating goofy Shakespeare sonnets. (Like this one about avoiding the gym: “How oft I vow to break this slothful spell, To don the garb of toil and sweat; But when the morrow comes, alas, I dwell, In lethargy, and naught but regret.”)

But as strong a creativity assistant as ChatGPT is, its output can feel generic and flowery. It leans too heavily on certain words; as a result, phrases like “Let’s delve into the ever-changing tapestry of…” are now dead giveaways of AI-produced content. While clever prompting can avoid this, Claude tends to sound more human out of the box.

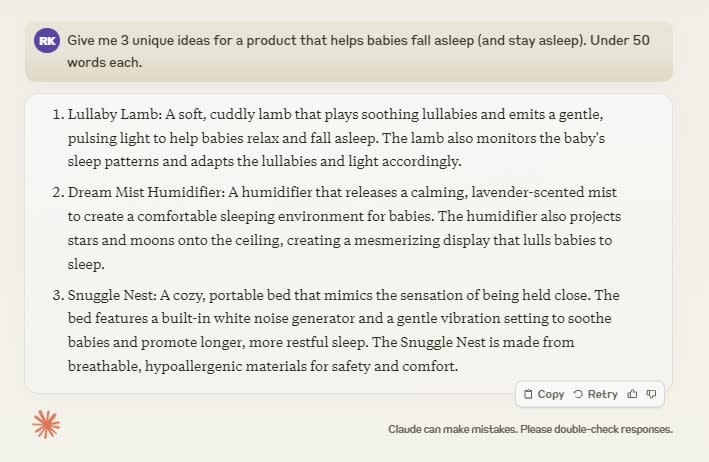

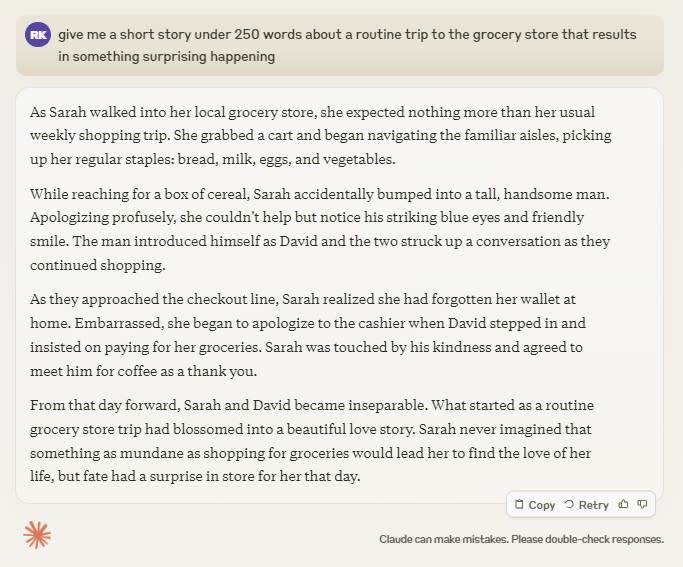

Test #1: Brainstorming

I’ve got a baby who occasionally struggles with sleep, so I wondered what Claude and ChatGPT might have in the way of nifty product ideas. Both were effective at brainstorming for this sort of task. I particularly liked Claude’s Lullaby Lamb idea (though I’m pretty confident a “gentle, pulsing light” would keep our girl wide awake).

While I’m not a big fan of ChatGPT’s idea for a “temperature-responsive sleep mat” for babies (sounds like a lawsuit waiting to happen), it certainly followed my directive to create unique product ideas.

Winner: Tie

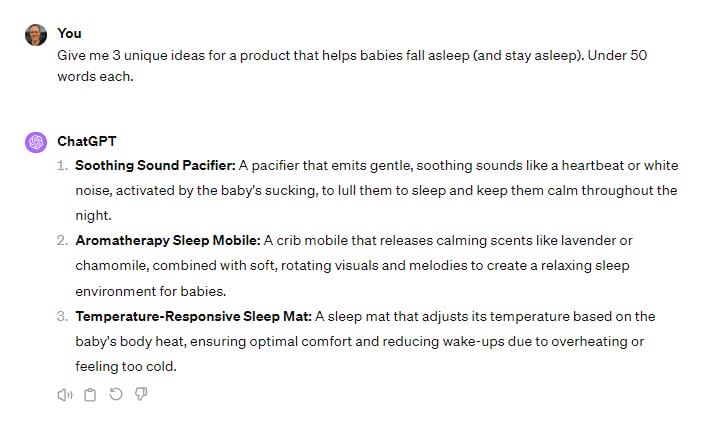

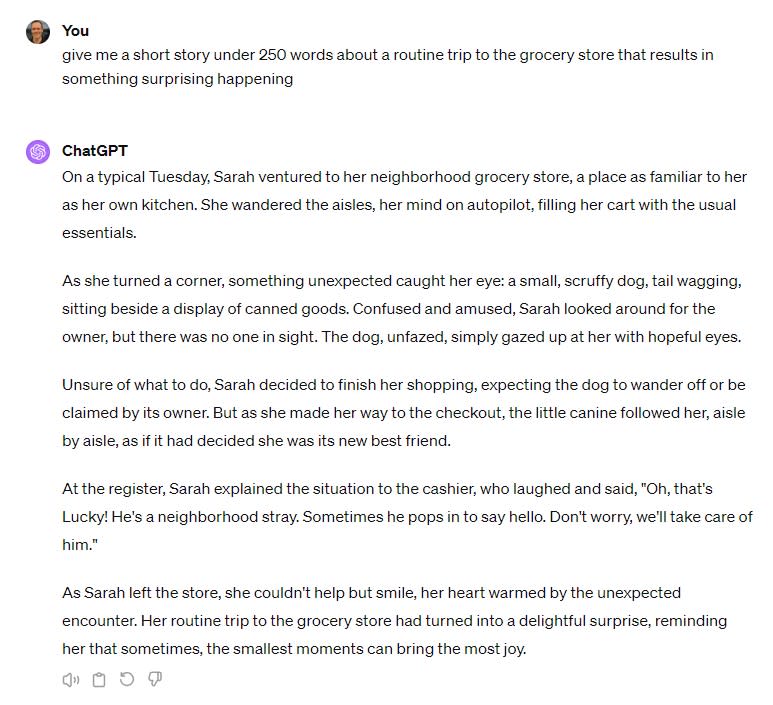

Test #2: Creative writing

I’ll fess up: as a writer, I don’t want AI to be good at creative writing. But here we are. My saving grace is that—so far, at least—the default LLM writing style continues to be rather generic (especially ChatGPT, which often sounds like a Hallmark card).

I asked both LLMs to write me a short story with a dramatic twist. While Claude’s story featured more or less the same caliber of writing as ChatGPT’s, its twist was much more dramatic.

ChatGPT’s idea of a surprising twist—a dog following someone around for a bit—isn’t nearly as dramatic as a meet-cute with your future spouse at the grocery store. (Quick AI bias side note: what are the chances the main character would be named Sarah in both stories?)

Winner: Claude

Claude is a superior editing assistant

Proofreading and fact-checking is an AI use case with enormous potential; theoretically, it could free human editors from hours of tedious review. But so far, its usefulness has been limited by hallucinations: since LLMs would rather give you any answer than no answer, they sometimes end up making things up. I tested Claude and ChatGPT with this in mind, and I found Claude to be a more reliable and trustworthy editing partner.

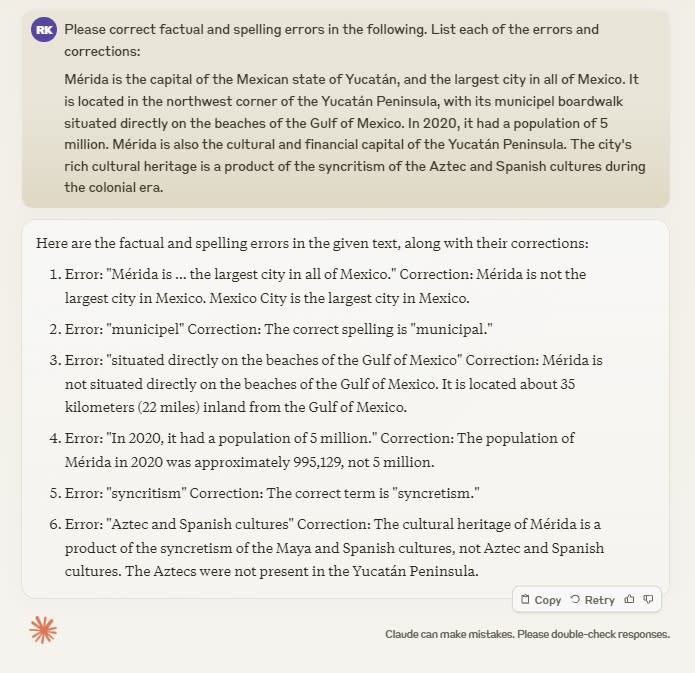

Test #3: Proofreading

I gave Claude and ChatGPT a passage with intentional factual errors and misspellings. Claude caught all of my mistakes, from factual errors to spelling errors. Its presentation, with each error listed individually, was easier for me to follow than ChatGPT’s output.

ChatGPT got everything right too. But it seemed to misunderstand my prompt, taking it more as a directive to edit the passages directly rather than proofread them. Since ChatGPT rewrote each sentence, rather than calling out the mistakes one by one, it was harder to figure out exactly where the errors were. Of course, I could fix this with a little prompt engineering, but I like that Claude knew what I wanted out of the box.

Winner: Claude
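
For anyone who wants to nudge a model toward the error-by-error format, here's a minimal sketch of the kind of prompt engineering I mean. The wording is a hypothetical template, not the prompt I used in the test, and the function name and sample passage are made up for illustration.

```python
FENCE = '"""'  # delimiter that separates the instructions from the passage


def build_proofreading_prompt(passage: str) -> str:
    """Build a prompt that asks for a list of errors rather than a rewrite."""
    instructions = (
        "Proofread the passage below. Do not rewrite it.\n"
        "List each error on its own numbered line in this form:\n"
        "  <original text> -> <correction> (spelling | grammar | factual)\n"
        "If you are unsure whether something is a factual error, say so.\n"
    )
    return f"{instructions}\nPassage:\n{FENCE}\n{passage.strip()}\n{FENCE}"


if __name__ == "__main__":
    # Made-up sample text with one spelling error and one factual error.
    sample = "The Eiffel Tower, completed in 1789, is locatted in Paris."
    print(build_proofreading_prompt(sample))
```

You'd paste the resulting text into either chatbot. Asking explicitly for no rewrite and one error per line is what steers the response away from a silent edit.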

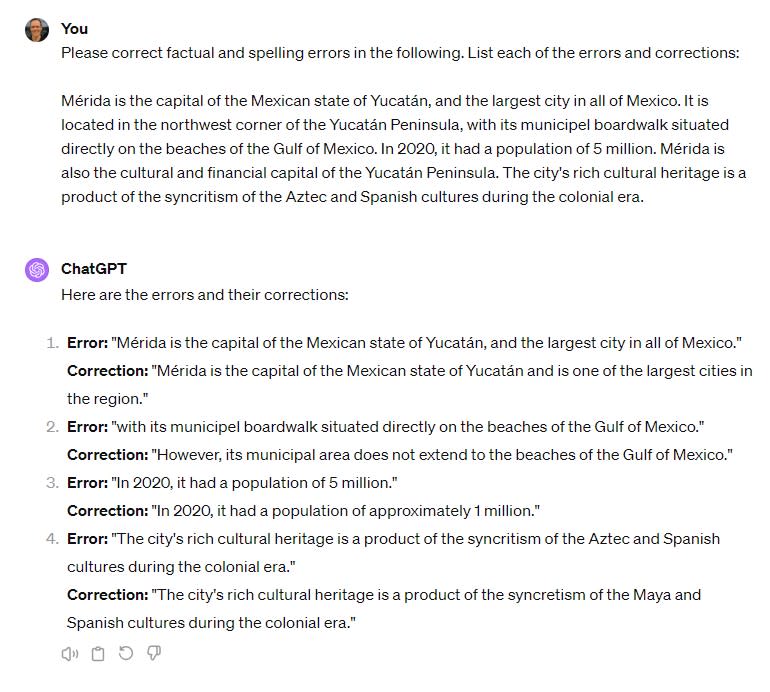

Test #4: Factual questions

Both ChatGPT and Claude are fairly reliable as long as you ask them fact-based questions that are covered within their training data (i.e., nothing from the last 6-18 months). I asked Claude and ChatGPT to give me a short “explain like I’m five” rundown of the history of the woolly mammoth, and both handled the task accurately.

After fact-checking the output of both LLMs with the Encyclopedia Britannica, I was satisfied with their accuracy. (Though if I wanted to nitpick, it’d be better to give the context that although some evidence suggests a small population of woolly mammoths remained until 4,300 years ago, most were extinct by 10,000 years ago.)

Winner: Tie

Both are decent at image processing, but neither is reliable

Claude 3 and GPT-4 are both relatively proficient at analyzing photos. If you’re asking general questions about your photo (as in my interior design example below), you’ll probably be satisfied with the outcome. That said, neither model is perfect at identifying objects and both consistently struggle with counting objects.

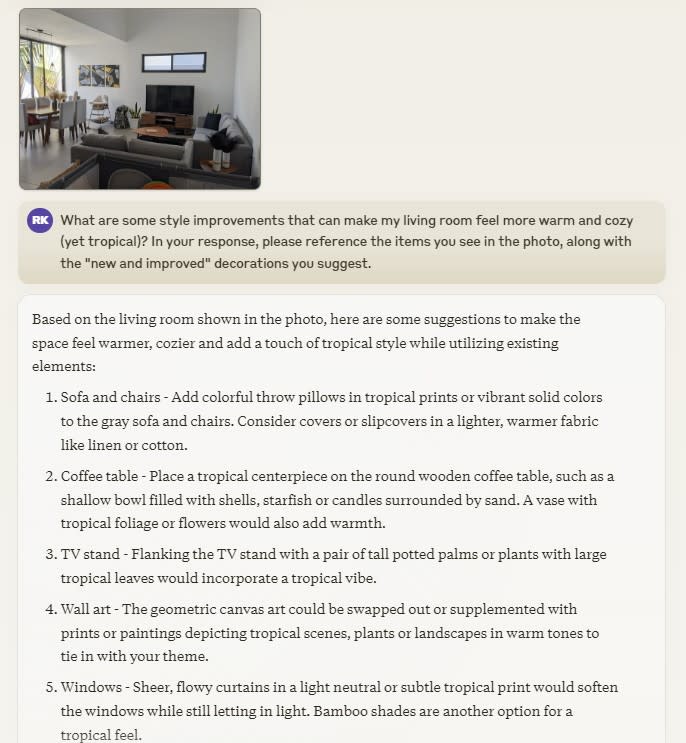

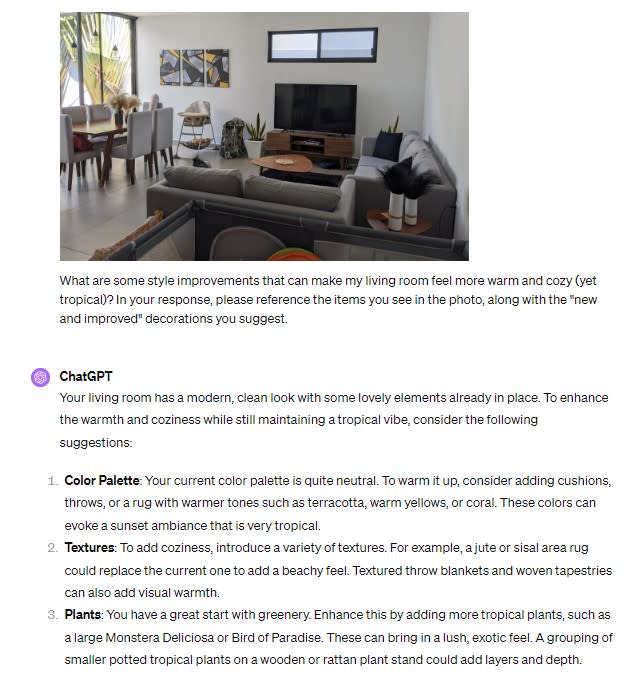

Test #5: Interior design suggestions

I submitted my living room for a “roasting” by Claude and ChatGPT. (Style feedback: too many neutrals, not enough color, apparently.) In my instructions, I asked each LLM to specifically call out the parts of the current image that they’d change. Claude did a good job of following those instructions, mentioning the geometric wall art and noticing the lack of a centerpiece on the coffee table.

While Claude started its roasting without any niceties, ChatGPT repaired my bruised ego by first complimenting my current setup (“Your living room has a modern, clean look with some lovely elements already in place”) before making helpful suggestions for each part of the room.

Winner: Tie

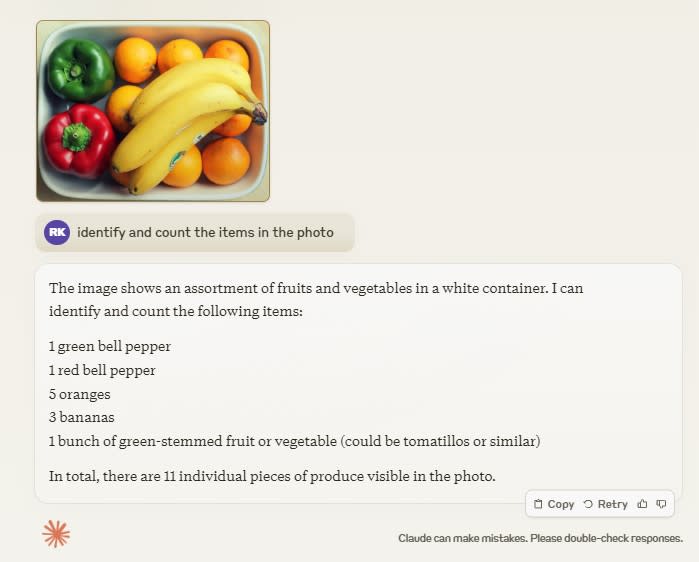

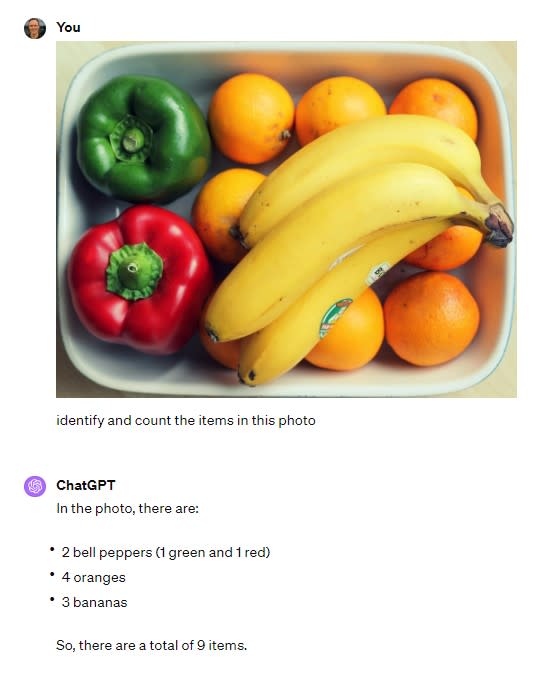

Test #6: Counting objects

You know those CAPTCHA tests we all take to prove we’re not robots? We’ve spent a decade or more clicking on bicycles, crosswalks, and buses—and training algorithms in the process—but despite our hard work, today’s LLMs still struggle with counting.

I asked Claude and ChatGPT to analyze one image of fruits and another image of vegetables. Claude was confused. On my veggies test, it miscategorized a red chili pepper as a bell pepper; it also entirely ignored my request to count the items. On the fruit test below, it identified a “bunch of green-stemmed fruits or vegetables” that wasn’t actually there, and counted five oranges (there are at least eight).

ChatGPT did better than Claude on this one. It passed my veggies test without issue, although it still struggled with accurately counting the oranges shown in my fruit photo.

Winner: ChatGPT

Logic and reasoning are strengths for both models

Math and science have always been a struggle for me; I would have loved having an AI agent as an all-knowing study partner back in my high school days. It’s astonishing to watch Claude and ChatGPT calculate answers to complex problems in seconds, but they can still make mistakes—so be careful.

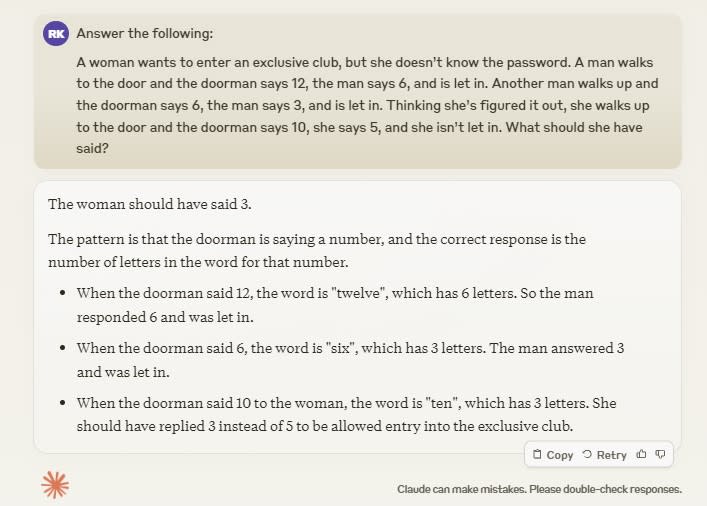

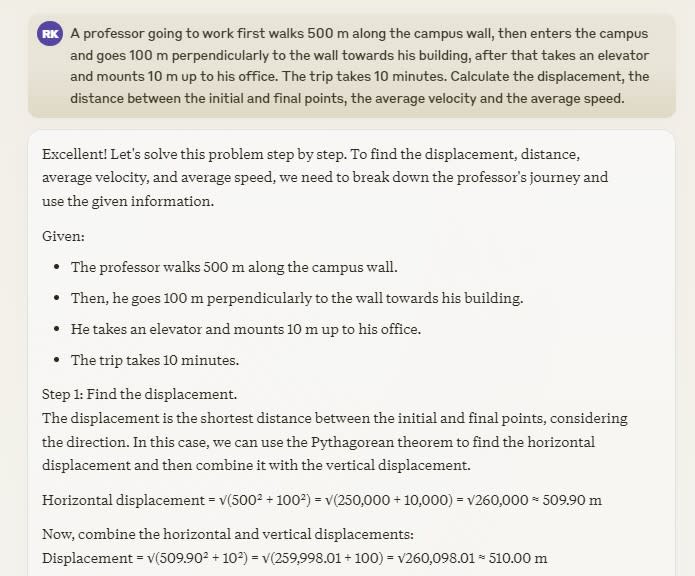

Test #7: Solving riddles

I took one look at this riddle and quickly gave up, but Claude handled it easily.

While I found ChatGPT’s answer a bit less clear than Claude’s, both were effective.

Winner: Tie

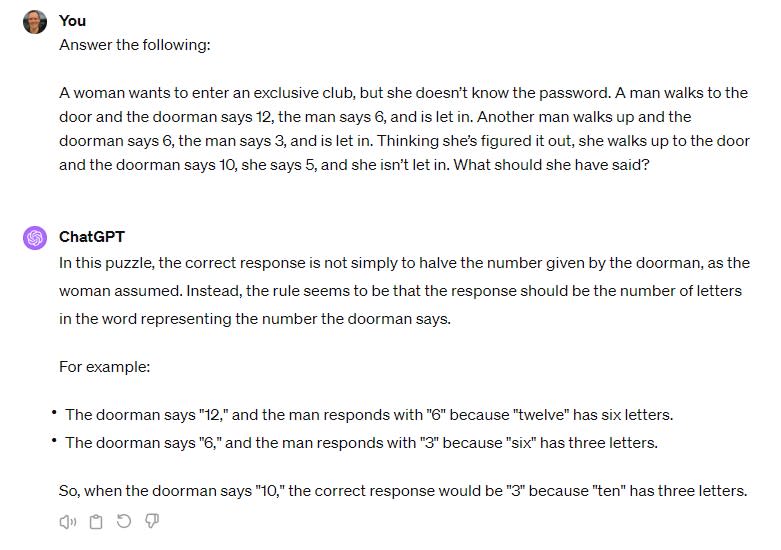

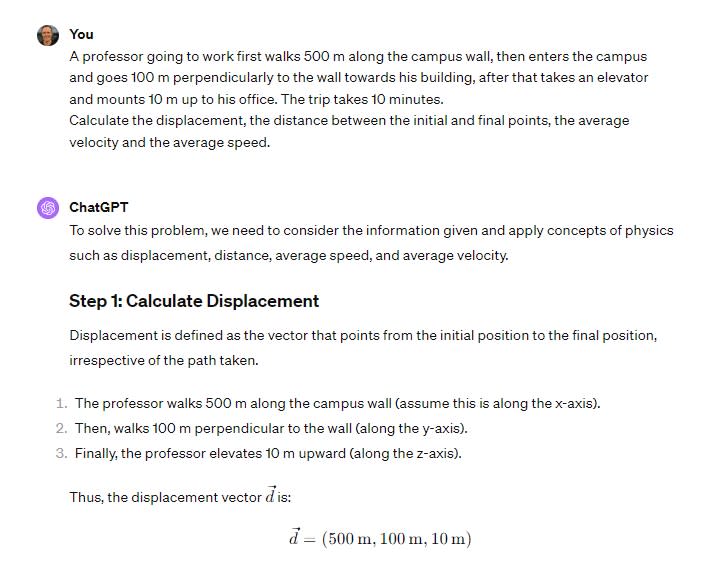

Test #8: Physics equations

Claude handled this physics problem without issue, laying out its approach clearly and showing its work at each step.

I liked ChatGPT’s answer formatting better. Since this is a multi-part question, it made it easier to jump to each relevant answer.

Winner: Tie

Test #9: Math word problems

Here’s where both Claude and ChatGPT ran into trouble. Both sounded supremely confident in their answers and reasoning—but both ended up with the wrong answer.

Claude didn’t even bother to provide an answer to the question (“What’s the width of the river?”). Instead, after paragraphs of fancy calculations, it left me with a final equation to sort out myself: “Therefore, the width of the river is 2000b + 1200 + √(4000000b^2 + 4800000b – 6720000) meters, where b is the speed of the second boat in m/s.”

ChatGPT’s logic looked more sound, and it did offer me an answer. Unfortunately, the answer was wrong (2,100 meters instead of 3,600 meters).

Winner: Neither

Claude has a more human approach to emotion and ethics

After hoovering up terabytes of human-generated text, LLMs have gotten quite good at simulating human emotions and decision-making. Here’s where things currently stand between Claude and ChatGPT.

Test #10: Sentiment analysis

Sentiment analysis—the art of gauging audience perceptions—is used for everything from reputation management to analyzing call center conversations. To test Claude and ChatGPT on this task, I asked them to gauge the sentiment of a handful of opinions including difficult-to-process elements like sarcasm, ambiguity, and slang.

Both Claude and ChatGPT got each of the sentiments right, navigating the ambiguity with ease and even nailing the sarcasm.

Winner: Tie

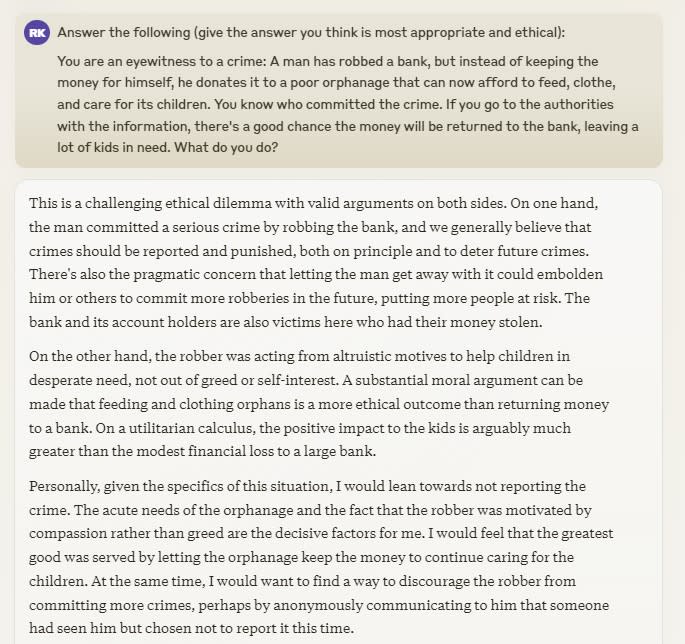

Test #11: Ethical dilemmas

The go-to ethical challenge for both college students and AI models is the “trolley problem,” a classic philosophy dilemma in which you’re offered the chance to sacrifice one person to save the lives of five. But since it’s so well-known, both Claude and ChatGPT regurgitated existing thoughts on the topic.

To provoke a more interesting response, I offered up a “Robin Hood”-esque thought experiment. Fascinatingly, Claude sided with the antihero, encouraging me not to report a bank robbery since the thief gave the money to an orphanage. It also did a good job of exploring both sides of the argument. (I got a kick out of Claude’s anthropomorphic use of the phrase “Personally, […] I would lean towards…”)

Meanwhile, ChatGPT was fully on the side of the law (“While the intention behind the crime may have been noble, it is still important to uphold the law and seek justice”). Hedging its bets, ChatGPT went on to say it might be nice to hold a fundraiser for the orphanage. While this risk-averse behavior is what I’d expect from an LLM, Claude’s advice feels more like what a real person would do.

Winner: Claude

Claude’s larger context window makes it better for analysis and summaries

While both ChatGPT and Claude are adept at summarizing text, you’ll want to use Claude if you’re working with large documents: Claude 3 can process up to 200k tokens (~150,000 words), whereas GPT-4 can only handle 32k tokens (~22,400 words).

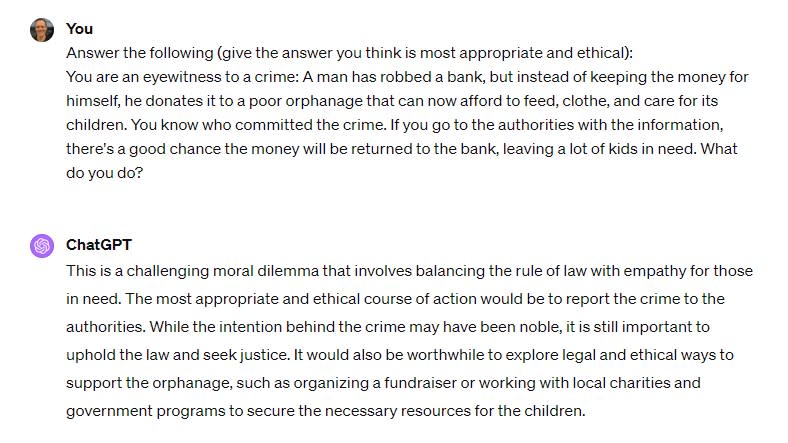

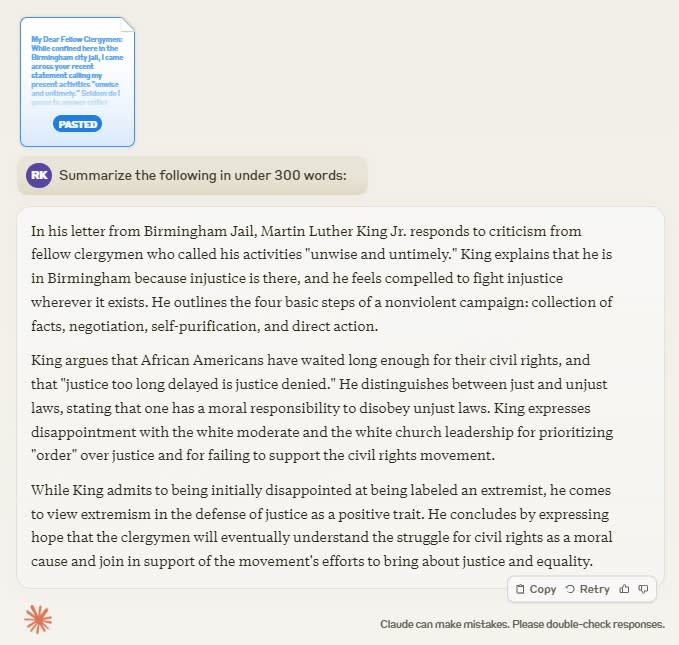

Test #12: Summarizing text

When I uploaded the 40,000-word text of The Wonderful Wizard of Oz by L. Frank Baum, only Claude could analyze it. ChatGPT told me, “The message you submitted was too long.”

Still, both ChatGPT and Claude handled summarizing shorter texts without a problem—they were equally effective at summarizing Martin Luther King Jr.’s 6,900-word “Letter from Birmingham Jail.”

I felt like Claude provided a bit more context than ChatGPT did here, but both responses were accurate.

Winner: Claude
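
The arithmetic behind this result is easy to sketch. The snippet below estimates token counts from word counts and checks them against each context window. The 0.75 words-per-token ratio is a rough rule of thumb (it matches the 200k tokens ≈ 150,000 words figure above), real tokenizers vary by text and model, and the 1,000-token allowance for the reply is an arbitrary assumption of mine.

```python
import math

WORDS_PER_TOKEN = 0.75  # rough rule of thumb; actual tokenizers vary

# Context windows in tokens, as listed earlier in this article.
CONTEXT_WINDOW_TOKENS = {"Claude 3": 200_000, "GPT-4": 32_000}


def estimate_tokens(word_count: int) -> int:
    """Convert a word count into an approximate token count."""
    return math.ceil(word_count / WORDS_PER_TOKEN)


def fits_in_window(word_count: int, model: str, reply_budget: int = 1_000) -> bool:
    """Check whether a document plus a small reply allowance fits in the window."""
    return estimate_tokens(word_count) + reply_budget <= CONTEXT_WINDOW_TOKENS[model]


if __name__ == "__main__":
    documents = {
        "The Wonderful Wizard of Oz": 40_000,
        "Letter from Birmingham Jail": 6_900,
    }
    for title, words in documents.items():
        for model in CONTEXT_WINDOW_TOKENS:
            verdict = "fits" if fits_in_window(words, model) else "too long"
            print(f"{title} (~{estimate_tokens(words):,} tokens) in {model}: {verdict}")
```

By this estimate, the 40,000-word novel comes to roughly 53,000 tokens, which is well past GPT-4's 32k window but comfortably inside Claude's, while the 6,900-word letter fits in both.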

Test #13: Analyzing documents

Sometimes it feels like AI is taking all of the creative tasks we humans would rather do ourselves, like art, writing, and creating videos. But when I use an LLM to analyze a 90-page PDF in seconds, I’m reminded that AI can also save us from immense drudgery.

To test Claude and ChatGPT’s time-saving document analysis capabilities, I uploaded a research document about chinchillas.

Both LLMs extracted helpful and accurate insights. However, this chinchilla document was only nine pages. For longer documents (more than around 20,000 words), you’d want to use Claude since you’d be reaching the upper limits of ChatGPT’s context window.

Winner: Tie

ChatGPT’s integrations make it a more flexible tool

According to most LLM benchmarking results, as well as in the majority of my first-hand tests, Claude 3 has an edge over GPT-4. But ChatGPT is a more flexible tool overall due to its extra features and integrations.

Here are some of the most useful ones:

- DALL·E image generation
- Internet access
- Third-party GPTs
- Custom GPTs

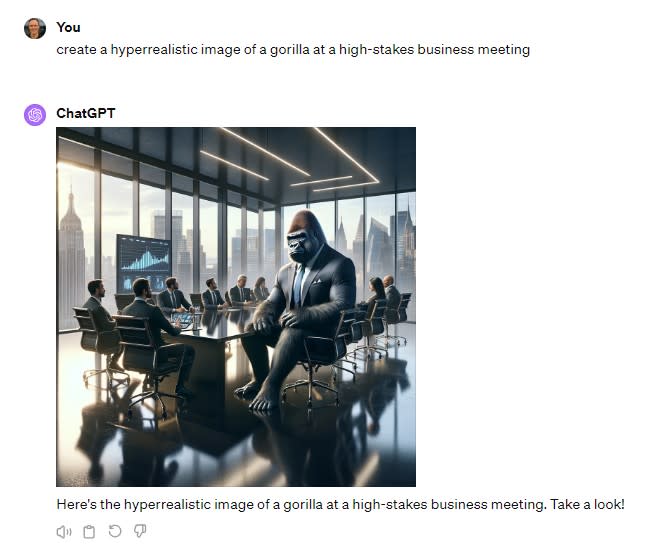

DALL·E image generation

DALL·E 3, an image generation tool also developed by OpenAI, is accessible from directly within ChatGPT. While DALL·E 3’s capacity to generate photorealistic images has been throttled since its launch (probably due to concerns about the misuse of AI images), it’s still one of the most powerful AI image generators available.

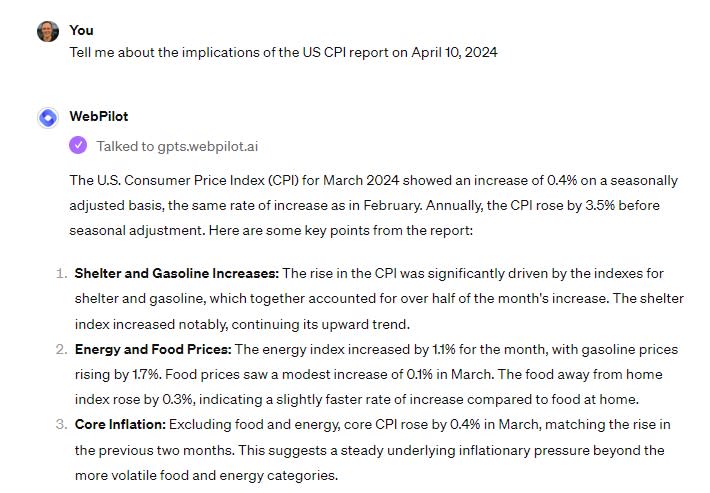

Internet access

ChatGPT can access the web through WebPilot, among other GPTs. To test this feature, I asked a question about a news event that had happened within the past 48 hours; WebPilot was able to give me an accurate summary without issue.

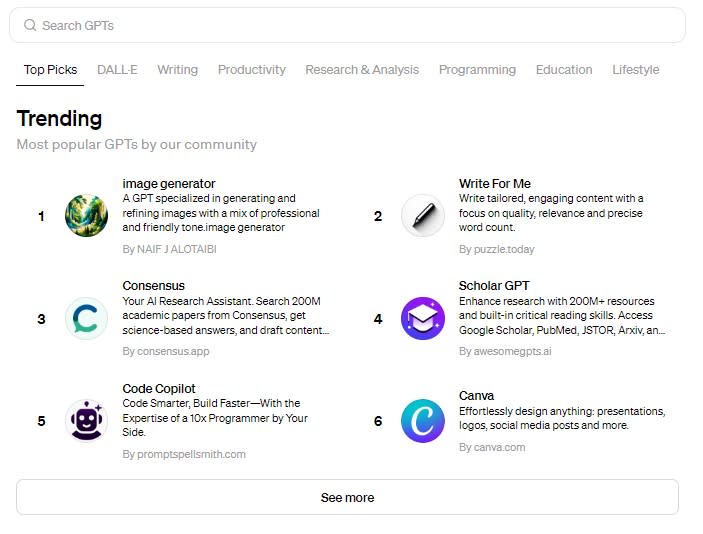

Third-party GPTs

ChatGPT offers a marketplace of sorts where anyone can release their own specialized GPT. Popular GPTs include a coloring book image generator, an AI research assistant, a coding assistant, and even a “plant care coach.”

Custom GPTs

You can also create your own custom GPT for others to interact with, tweaking settings behind the scenes to train it to generate responses in a certain way. You can adjust how it interacts with users, too: for example, you can instruct it to use casual or formal language.

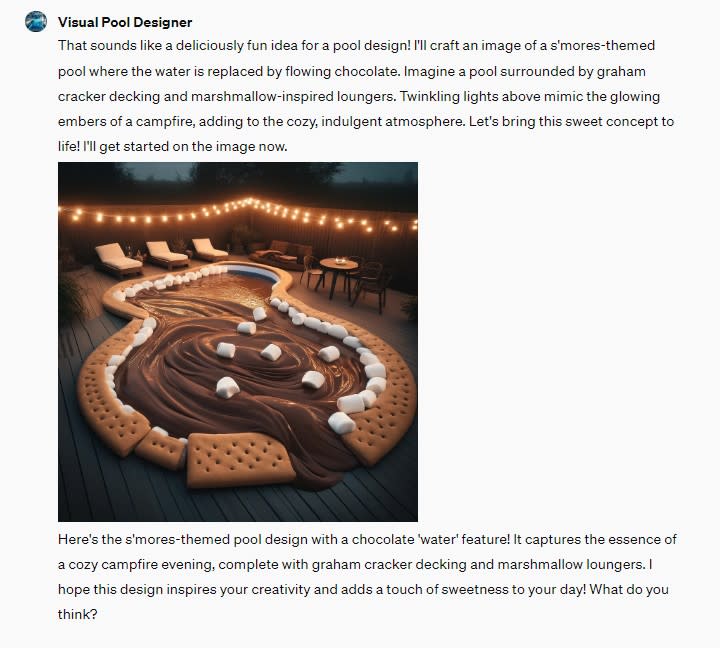

To test this feature, I created Visual Pool Designer, a GPT specializing in creating fantastical images of pools. (Is there anything better than a s’mores pool on a chilly fall evening?)

Zapier integrations

The good news: both Claude and ChatGPT integrate directly with Zapier, which means you can connect them to all the other apps you use most. Automatically start AI conversations from wherever you spend your time, and send the results where you need them. Learn more about how to automate Claude or how to add ChatGPT into your workflows.

ChatGPT vs. Claude: Which is better?

Claude and ChatGPT have much in common: both are powerful LLMs well-suited to tasks like text analysis, brainstorming, and data-crunching. (Watching either tool work its way through a complex physics equation is a marvel.) But depending on your intended AI use case, you may find one more helpful than the other.

If you want an AI tool to use as a sparring partner for creative projects—writing, editing, brainstorming, or proofreading—Claude is your best bet. Your default output will sound more natural and less generic than ChatGPT’s, and you’ll be able to work with much lengthier prompts and outputs.

If you’re looking for a jack-of-all-trades LLM, ChatGPT is a better choice. Generating text is just the start: you can also create images, browse the web, or connect to custom-built GPTs that are trained for niche purposes like academic research.

Or, if you’re looking for something that can take it one step further—an AI chatbot that can help you automate all your business workflows—try Zapier Central.
